Note: this post was written for Lean 3; the latest version, Lean 4, is a very different language.

Turn back the clock to 2009: a confused physics major newly infatuated with math and computer science, I enrolled in MATH 273: Numbers and Proofs at the University of Calgary. This wasn’t my first encounter with mathematical proof; in first-year calculus I’d mastered rote regurgitation of delta-epsilon proofs. Despite writing out several dozen, their meaning never progressed beyond a sort of incantation I can summon to this day (for every \( \epsilon > 0 \) there exists a \( \delta > 0 \) such that…). We were told on the first day of MATH 273 that the purpose of proof is to compel belief. This was a bright start but sadly marked the beginning of a deeply embarrassing semester reaching its nadir when I asked the professor “how do we prove a definition” fully two-thirds of the way through the course. I got a B-.

My understanding of proofs improved over the course of the next decade, but I don’t think I really got them until encountering the Lean theorem prover this past year. With Lean you have your hypotheses, you have your proof goal (the thing you’re trying to prove), and you have a set of moves you can make. It’s very much like a game; in fact the best introduction to Lean is The Natural Number Game, which is the best puzzle game of any type I’ve played in years. Before I learned Lean, writing proofs was like playing chess without knowing that bishops existed or how knights moved and thinking pawns could teleport around the board. Just knowing the rules, knowing what constitutes a valid move and knowing the space (or at least more of the space) of valid moves, was so powerful for my understanding of how to write a proof. Writing proofs became like navigating an endlessly fascinating maze, using theorems to hop from place to place until finding the conclusion!

I wanted to re-examine some MATH 273 assignments in light of my newfound proof powers. One candidate came easily to mind - the closed-form equation for the sum of the first \(n\) natural numbers:

$$ \sum_{k = 0}^n k = \frac{n \cdot (n + 1)}{2} $$

This seemingly arbitrary equation has an intuitive explanation. Consider a list of all the numbers in the sum, from \(1\) to \(n\) (exclude 0 because it doesn’t change the result). Pair up the numbers on the outside edges of the list, moving inward: \(1\) and \(n\), \(2\) and \(n - 1\), \(3\) and \(n - 2\), etc. In cases where the number in the middle of the list lacks a pair, put it to the side:

| \(n\) | List of Numbers | Pairs | Remainder |

|---|---|---|---|

| \(0\) | \(\{\}\) | \(\{\}\) | |

| \(1\) | \(\{1\}\) | \(\{\}\) | 1 |

| \(2\) | \(\{1, 2\}\) | \(\{(1, 2)\}\) | |

| \(3\) | \(\{1, 2, 3\}\) | \(\{(1, 3)\}\) | 2 |

| \(4\) | \(\{1, 2, 3, 4\}\) | \(\{(1, 4), (2, 3)\}\) | |

| \(5\) | \(\{1, 2, 3, 4, 5\}\) | \(\{(1, 5), (2, 4)\}\) | 3 |

| \(6\) | \(\{1, 2, 3, 4, 5, 6\}\) | \(\{(1, 6), (2, 5), (3, 4)\}\) | |

| \(7\) | \(\{1, 2, 3, 4, 5, 6, 7\}\) | \(\{(1, 7), (2, 6), (3, 5)\}\) | 4 |

| \(\ldots\) | \(\ldots\) | \(\ldots\) |

If you add the numbers in each pair together you’ll always get \(n + 1\), and if \(n\) is even then there are \(n/2\) such pairs - voila! Things are a bit more complicated when \(n\) is odd; there are \((n - 1)/2\) pairs, each summing to \(n + 1\), with a single remainder \((n + 1)/2\):

$$ \frac{n - 1}{2} \cdot (n + 1) + \frac{n + 1}{2} $$ $$ \frac{(n - 1) \cdot (n + 1) + (n + 1)}{2} $$ $$ \frac{((n - 1) + 1) \cdot (n + 1)}{2} $$ $$ \frac{n \cdot (n + 1)}{2} $$

This chain of reasoning might compel belief in our soft human brains, but things have changed and that’s no longer sufficient for the turbulent times in which we live. What would a computer say?

Reasoning about numbers like a computer

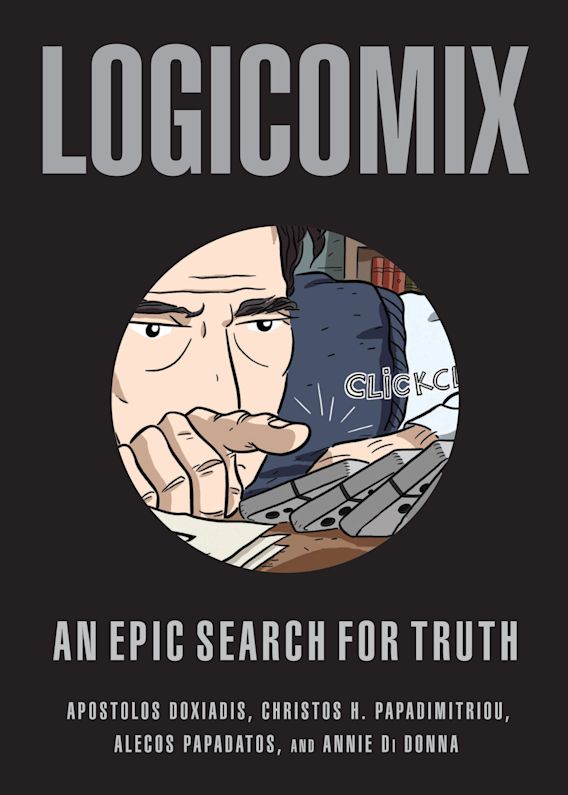

You may or may not know the grand tale of how in the early 20th century logicians such as Bertrand Russell (and many others) fought to put all of mathematics on a solid axiomatic basis. The idea was to have a fairly small number of simple axioms - things which are just assumed to be true - and build up all of mathematics following logically from this foundation. This, basically, is how computers understand mathematics.

We don’t need to delve very deeply into this topic beyond explaining how the natural numbers are defined axiomatically (they were actually formalized two centuries earlier and called The Peano Axioms). There are nine axioms, but the ones we’re interested in are:

- There is a natural number, \(0\)

- There is a successor function, \(S\), where \(S(n)\) is the number after \(n\) (basically \(n + 1\))

In this formalization every natural number is just some number of nested successor functions applied to zero. For example:

| Conventional notation | Peano notation |

|---|---|

| \(0\) | \(0\) |

| \(1\) | \(S(0)\) |

| \(2\) | \(S(S(0))\) |

| \(3\) | \(S(S(S(0)))\) |

| \(4\) | \(S(S(S(S(0))))\) |

| \(\ldots\) | \(\ldots\) |

This is somewhat similar to counting in unary. We also need to define addition; this is done recursively with a base case and general case:

- Base case: \(n + 0 = n\)

- General case: \(n + S(m) = S(n + m)\)

It’s difficult to see how this defines addition; here’s how it works when calculating \(1 + 2\):

| Recursion level | Value |

|---|---|

| \(0\) | \(S(0) + S(S(0))\) |

| \(1\) | \(S(S(0) + S(0))\) |

| \(2\) | \(S(S(S(0) + 0))\) |

| \(3\) | \(S(S(S(0)))\) |

The final ingredient we need is mathematical induction, which is a tactic used to prove that a certain proposition \(P\) holds for all natural numbers. It suffices to show that:

- Base case: \(P(0)\) is true

- Inductive case: assuming \(P(n)\) is true, \(P(S(n))\) is true

The usual analogy here is an infinite line of dominos: if you knock down the first domino (show the base case holds) and know that each domino will knock down the domino after it (show the inductive case holds), then you know that all dominos will be knocked down (the proposition will hold for all natural numbers).

With these primitive tools we can use computers to construct many interesting theorems about the natural numbers!

Stating the problem in Lean

To follow along in this section, you can use the Lean web editor or create a new Lean project on your own computer.

Recall the theorem we want to prove:

$$ \sum_{k = 0}^n k = \frac{n \cdot (n + 1)}{2} $$

It turns out that integer division is annoyingly difficult to reason about, so let’s rewrite this as follows:

$$ 2 \cdot \sum_{k = 0}^n k = n \cdot (n + 1) $$

The first thing we want to do in Lean is recursively define the sum of the first \(n\) natural numbers (you can think of this as the left-hand-side of the equation):

def sum_of_first_n_nat : ℕ → ℕ

| 0 := 0

| (nat.succ n) := (nat.succ n) + sum_of_first_n_nat n

In Lean, the symbol ℕ (for the natural numbers) is expressed with \nat.

The successor function \(S\) is defined as nat.succ.

We’ve defined here a function which takes an \(x \in \mathbb{N}\) and returns the sum of all the natural numbers from \(0\) to \(x\).

The | character pattern-matches on the value of \(x\); the top line is the base case and the bottom line is the general case.

You can test this function as follows (place your cursor at the end of the line to see the result in the Lean goal window):

#eval sum_of_first_n_nat 4

Now for the main event! Let’s write our theorem in Lean, then prove it:

theorem closed_eq_sum_of_first_n_nat (n : ℕ) :

2 * (sum_of_first_n_nat n) = n * (nat.succ n) :=

begin

end

Putting your cursor between the begin and end markers will bring up the current proof goal in the Lean goal window.

The base case

The ⊢ character denotes the thing we are currently trying to prove; we’re going to prove it inductively. Type:

induction n with d hd,

The Lean proof window will now show the following two goals, the base case and the inductive case:

2 goals

case nat.zero

⊢ 2 * sum_of_first_n_nat 0 = 0 * 1

case nat.succ

d : ℕ,

hd : 2 * sum_of_first_n_nat d = d * nat.succ d

⊢ 2 * sum_of_first_n_nat (nat.succ d) = nat.succ d * nat.succ (nat.succ d)

We will now make use of the workhorse rewrite tactic, rw.

This tactic modifies our proof goal according to an existing definition or equation.

Here, we can rewrite sum_of_first_n_nat 0 to its value by definition (0):

rw sum_of_first_n_nat,

By default Lean applies all statements to the topmost proof goal, so our base case will be rewritten as:

case nat.zero

⊢ 2 * 0 = 0 * 1

We can now make use of the basic theorems nat.mul_zero, which says n * 0 = 0, and nat.zero_mul, which says 0 * n = 0:

rw nat.mul_zero,

rw nat.zero_mul,

This proves the base case!

The inductive case

Only the inductive case remains:

case nat.succ

d : ℕ,

hd : 2 * sum_of_first_n_nat d = d * nat.succ d

⊢ 2 * sum_of_first_n_nat (nat.succ d) = nat.succ d * nat.succ (nat.succ d)

It’s worth taking some time to break down what everything means here.

d and hd are our hypotheses: things which we are assuming for the purpose of proving the conclusion, denoted by ⊢.

We can use our hypotheses in rewrites of the conclusion.

Similar to the base case, we’ll first rewrite this expression according to the definition of the general case of sum_of_first_n_nat:

rw sum_of_first_n_nat,

This transforms our proof goal to:

d : ℕ,

hd : 2 * sum_of_first_n_nat d = d * nat.succ d

⊢ 2 * (nat.succ d + sum_of_first_n_nat d) = nat.succ d * nat.succ (nat.succ d)

We now want to multiply out 2, with the aim of being able to rewrite our proof goal with our inductive hypothesis hd.

Use the nat.left_distrib theorem:

rw nat.left_distrib,

Now we have:

d : ℕ,

hd : 2 * sum_of_first_n_nat d = d * nat.succ d

⊢ 2 * nat.succ d + 2 * sum_of_first_n_nat d = nat.succ d * nat.succ (nat.succ d)

We can now rewrite 2 * sum_of_first_n_nat d in the proof goal using our inductive hypothesis hd!

rw hd,

to get:

⊢ 2 * nat.succ d + d * nat.succ d = nat.succ d * nat.succ (nat.succ d)

At this point, these are clearly equal; we just need some simple algebraic manipulations to prove it. To save space I’ll do them all in one shot, with comments.

--rewrites nat.succ n to n + 1:

rw nat.succ_eq_add_one,

--rewrites nat.succ (n + m) to n + nat.succ m (from defn of addition)

--note rw usually rewrites from left to right over an equality; ← (\l) does right to left

rw ← nat.add_succ,

--rewrites nat.succ 1 to 2 (for clarity)

rw (show nat.succ 1 = 2, by refl),

--multiplies out d + 1

rw left_distrib (d + 1) d 2,

--moving things around with commutativity

rw mul_comm 2 (d + 1),

rw mul_comm d (d + 1),

rw add_comm,

And we’re done!

Our proof is formally verified in Lean!

In case you lost track at some point, here is a link to the Lean web editor with the full proof (move your cursor to the end of each line to see the proof state at that position).

Now, some of you might be rolling your eyes at the last section.

Do we really want to be wasting all this time multiplying things out line by line like we’re in grade school?

Fortunately Lean provides some more advanced tactics which take care of the busywork.

The entire final sequence of commands after rw hd can be replaced with just one tactic: ring.

This tells Lean to intelligently search through the basic equalities underpinning the natural numbers in an attempt to make both sides of the equation equal each other.

It’s part of the community-maintained MathLib, and can be accessed by putting import tactic at the top of your .lean file. This extensibility with powerful tactics is one of Lean’s great features.

In the end

If this exercise appealed to you, you’ll greatly enjoy The Natural Number Game, a gamified introduction to Lean. I myself first heard about Lean from this excellent lecture by Dr. Kevin Buzzard of Imperial College London:

You can see the Hacker News comments and Lobste.rs comments for interesting discussion.I believe learning Lean has brought great clarity to my understanding of mathematical proofs. In a way it’s like the perfect scratch pad, focusing your mind on the goal while keeping track of all your assumptions and checking your thinking. Maybe I would’ve had an easier time learning proofs if I’d used Lean in the first place, although I have no way of knowing.

For now, I’m hoping to use Lean to formalize some results in quantum information processing. Maybe once coverage of existing theorems becomes great enough, my work will be of use to real QIP theoreticians. The idea of Lean being the basis for a mathematical search engine is also particularly interesting. A nice way for people who know more about computer science than math, like myself, to play a small part in the development of the field.